Deploying Firefox and Thunderbird Policies to Prevent Auto-Updates and Tune Other Features

Long-time Firefox and Thunderbird user here. I’ve tried dozens of other browsers, including the much-lauded Google Chrome, but I always come back to Firefox. For me it’s faster, lighter on memory, far more feature-rich and flexible, and more secure than the alternatives. Chrome, by comparison, is slow, is an extreme memory hog, has a questionable security model, and lacks the powerful features that I’ve come to use over the years.

I tend to run the latest “Developer” or “Nightly” editions of these tools, and by doing so I agree to certain constraints (daily, enforced upgrades being one example). With that sometimes come product changes that cause new, undiscovered issues, breakage and undefined behavior.

My Thunderbird mail folders, for example, go back 20 years and contain well over 200,000 archived and active emails. I’ve been a big proponent of Merlin Mann’s “Inbox Zero” methodology for almost 15 years, so I purge junk and unnecessary emails as they come in, but it’s important that the mail I keep be available and accessible on demand. Anything that breaks my ability to read an IMAP folder, or to search across those folders and tags, would not be good.

Enter Policies!

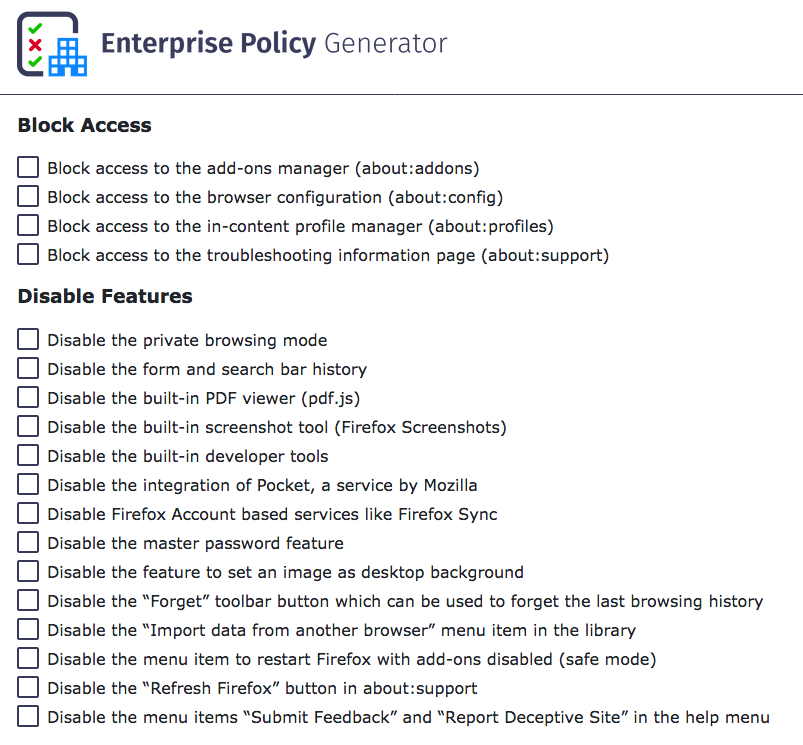

With policies deployed, you can govern what behavior is turned on, off and supported by your Firefox browser or Thunderbird mail client. For Firefox, there’s an easy add-on called “Enterprise Policy Generator” written by Sören Hentzschel that I use to start off the policies I’m interested in. Here’s just a small sample of what’s available in the tool:

Two of the first items I turn off are “Pocket” and the constant daily upgrade notices. I do upgrade frequently, but I make sure I back up my profile, add-ons and browser data before testing an upgrade, so I have a means to downgrade if the new version breaks my add-ons or my use of the browser. To turn off the update notices, you can create a policy with the Enterprise Policy Generator, or you can just create a policies.json and add the following to it:

    {
      "policies": {
        "DisableAppUpdate": true
      }
    }

This will stop the browser from requesting updates on a daily basis. There is a preference in Firefox under about:config called app.update.auto which can be set to “false”, but it doesn’t work. Likewise, blanking out app.update.url in the same configuration pane does not work either. The only way to do this is to deploy a policy that forbids it.

The policies.json file has to go into a specific directory in the application directory, not the user’s profile (where it could be altered or modified by each user). Here’s where those need to go:

On macOS

/Applications/Firefox Developer Edition.app/Contents/Resources/distribution

On Linux

If you’re using packages:

/usr/lib/firefox/distribution

If you’re using the tarball or nightly releases:

/opt/firefox/distribution

On Microsoft Windows

C:\Program Files\Firefox Developer Edition\distribution

The important part is that it lives in a new directory called distribution inside the same directory that holds the main Firefox data files. You’ll need to create this directory if it doesn’t already exist. For Thunderbird, the process is similar, just a slightly different directory:

On macOS:

/Applications/Thunderbird.app/Contents/Resources/distribution

or

/Applications/Thunderbird Daily.app/Contents/Resources/distribution

Follow the same model and paths you did with Firefox for Linux and Microsoft Windows.
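If you'd rather script this step than create the directory by hand, here is a minimal Python sketch of the layout described above. The function name `install_policies` is my own invention for illustration, not anything shipped by Mozilla. It assumes you pass in the application directory that should contain `distribution` (for example `/opt/firefox`), and that you run it with enough privileges to write there.

```python
import json
from pathlib import Path


def install_policies(app_dir, policies):
    """Write policies.json into <app_dir>/distribution.

    app_dir  -- the application directory (hypothetical example: /opt/firefox)
    policies -- a dict of policy names to values, e.g. {"DisableAppUpdate": True}
    """
    distribution = Path(app_dir) / "distribution"
    # Create the distribution directory if it doesn't already exist.
    distribution.mkdir(parents=True, exist_ok=True)

    target = distribution / "policies.json"
    # The file is a JSON object with a single top-level "policies" key.
    document = {"policies": policies}
    target.write_text(json.dumps(document, indent=2) + "\n", encoding="utf-8")
    return target
```

Calling `install_policies("/opt/firefox", {"DisableAppUpdate": True})` would produce the same minimal file shown earlier. Running it again simply overwrites the previous policies.json, so keep a copy of anything you edited by hand.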

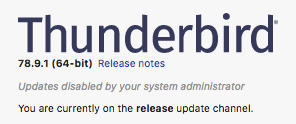

You’ll know if you put the policies.json in the correct directory, if you close and relaunch your Firefox or Thunderbird client, go to Help -> About, and see the following, near the top of the About dialog:

Here is a copy of an expanded policies.json that I use on my production systems:

    {
      "policies": {
        "DisableAppUpdate": true,
        "DisableFeedbackCommands": true,
        "DisableFirefoxStudies": true,
        "DisablePocket": true,
        "DisableSystemAddonUpdate": true,
        "DisableTelemetry": true,
        "ExtensionUpdate": false,
        "NetworkPrediction": true,
        "Preferences": {
          "browser.fixup.dns_first_for_single_words": true,
          "browser.tabs.warnOnClose": true
        },
        "PromptForDownloadLocation": true
      }
    }

You can use this for both Firefox and Thunderbird.
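As the file grows, a stray trailing comma or a missing brace becomes more likely, and as far as I know a file that fails to parse means the policies are not applied at all. Below is a small Python sketch for checking the file before copying it into place. The helper name `check_policies` is hypothetical, and it only checks JSON syntax and the top-level `policies` key. It does not know which policy names Firefox or Thunderbird actually support.

```python
import json


def check_policies(text):
    """Return a list of problems found in a policies.json document.

    An empty list means the text parsed as JSON and has the expected
    top-level "policies" object. Individual policy names are NOT validated.
    """
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        # Strict JSON: trailing commas and comments are rejected here.
        return [f"invalid JSON: {exc.msg} at line {exc.lineno}"]

    if not isinstance(data, dict) or not isinstance(data.get("policies"), dict):
        return ['top-level object must contain a "policies" object']
    return []
```

You would read your policies.json into a string and pass it in. Anything other than an empty list is worth fixing before you relaunch the browser.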

If you want a full breakdown of every possible policy item, you can visit the Mozilla Policy Templates GitHub page for detailed explanations.

While we’re on the subject of Git, you might also want to investigate using Git to manage these policies and configurations, so you can easily deploy them to every machine where you run your browser or mail client.
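One way this could look in practice: keep a single policies.json in a Git repository, pull it on each machine, and run a small script that copies it into whichever applications are installed there. The sketch below is only an illustration under those assumptions. The function name `deploy_policies` and the idea of passing a list of candidate application directories are mine, not part of any Mozilla tooling.

```python
import shutil
from pathlib import Path


def deploy_policies(source, app_dirs):
    """Copy one policies.json into every installed application directory.

    source   -- path to the policies.json in your Git checkout
    app_dirs -- candidate application directories; ones that don't
                exist on this machine are skipped silently
    Returns the list of files that were written.
    """
    written = []
    for app_dir in map(Path, app_dirs):
        if not app_dir.is_dir():
            continue  # this application isn't installed here
        distribution = app_dir / "distribution"
        distribution.mkdir(exist_ok=True)
        target = distribution / "policies.json"
        shutil.copyfile(source, target)
        written.append(target)
    return written
```

On a Linux machine you might call it with `["/usr/lib/firefox", "/opt/firefox"]` as the candidate list. Writing into those directories normally requires elevated privileges.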

Hope that helps. Good luck!
